Meet the Team: Jonathan Chirwa, Software Developer

VIA’s Meet the Team blog series features Q&A-style interviews with our team members. Each one covers what a typical work day looks like for them, plus some fun facts like their favorite foods.

What does a typical day at VIA look like for you?

My day always begins with a warm cup of coffee and a “good morning” message on Slack to signal my availability for the day. Then I review yesterday’s work to help prioritize the day’s tasks, and I attend two daily standup meetings with the team. After standup, I get to work! My tasks vary from day to day: solo or pair programming, preparing a presentation or demo, or researching a technology to use in a feature, such as the OAuth 2.0 framework to improve how our user management system authorizes and authenticates users.

What project have you worked on at VIA that you are most proud of?

The first project I worked on at VIA was a logging project that improves program observability and helps developers debug more easily. I’m proud to say that since the project was completed, other teams at VIA have been using it as part of their daily routine. I love that I was able to contribute to the whole team’s productivity early on at VIA.

What’s something everyone may not (but should!) know about working at VIA?

Since our work life has been remote over the last year, companies have been finding ways to motivate teams and keep company culture at the same, if not a higher, level. I think our team has come up with a creative idea: VIA awards teammates VIA-bucks (points) when something happens that is unique to working remotely, like forgetting to unmute yourself or having your pet interrupt a video call. This has really eased the transition to online meetings. Pretty cool, right? 🙂

What’s your favorite VIA memory?

My favorite memories at VIA are almost always from our team events. For example, our team played a game called “ling your language” where we listened to short clips of people speaking languages from around the world and had to identify each language from a given set of options. During the game, I left it to my fellow team members who are from or have lived in the Middle East, Europe, Asia, or North or South America to identify the languages from those regions. In turn, I helped more with identifying African languages.

Apart from earning us some VIA-bucks (which we will redeem for a group event once we are back in person together), the game reminded me of how diverse the VIA team is and how we work best when we are collaborating.

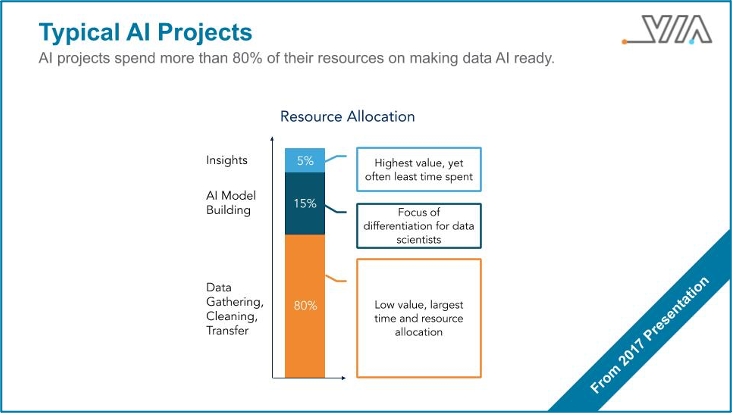

What makes VIA’s technology unique compared to others?

The idea of sharing code instead of data to compute analytics is something I had never heard of until I learned of VIA. In a world where data is gold but there are many valid privacy and security concerns, our TAC™ technology is a game-changing solution.

If you were given an extra hour in your day, what would you spend it doing?

Great question! If I lived in an Earth 2.0 world with 25-hour days, I would spend that extra hour reading a good book. It might be spiritual, philosophical, historical, or a how-to like Atomic Habits, which teaches useful techniques for developing new “good” habits and stopping potentially “bad” ones.

What’s one fun fact that you have learned about clean energy that you didn’t know before joining VIA?

During my first week at VIA, I had several onboarding meetings with teammates on topics ranging from social media to our tech stack. In one particular meeting, we covered the energy landscape. I was surprised and excited to learn that 2020 was the first year that clean energy was cheaper to produce than non-renewable (fossil) energy.

We have to ask, what is your go-to food?

My go-to food is a beef burger. I haven’t mastered a homemade one yet, maybe because I tasted Montreal’s “Burger Royal,” which set the bar too high.